A | B | C | D | E | F | G | H | CH | I | J | K | L | M | N | O | P | Q | R | S | T | U | V | W | X | Y | Z | 0 | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9

In computer science, the time complexity is the computational complexity that describes the amount of computer time it takes to run an algorithm. Time complexity is commonly estimated by counting the number of elementary operations performed by the algorithm, supposing that each elementary operation takes a fixed amount of time to perform. Thus, the amount of time taken and the number of elementary operations performed by the algorithm are taken to be related by a constant factor.

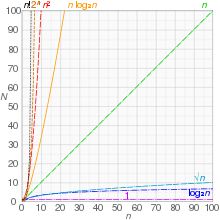

Since an algorithm's running time may vary among different inputs of the same size, one commonly considers the worst-case time complexity, which is the maximum amount of time required for inputs of a given size. Less common, and usually specified explicitly, is the average-case complexity, which is the average of the time taken on inputs of a given size (this makes sense because there are only a finite number of possible inputs of a given size). In both cases, the time complexity is generally expressed as a function of the size of the input.[1]: 226 Since this function is generally difficult to compute exactly, and the running time for small inputs is usually not consequential, one commonly focuses on the behavior of the complexity when the input size increases—that is, the asymptotic behavior of the complexity. Therefore, the time complexity is commonly expressed using big O notation, typically , , , , etc., where n is the size in units of bits needed to represent the input.

Algorithmic complexities are classified according to the type of function appearing in the big O notation. For example, an algorithm with time complexity is a linear time algorithm and an algorithm with time complexity for some constant is a polynomial time algorithm.

Table of common time complexities

The following table summarizes some classes of commonly encountered time complexities. In the table, poly(x) = xO(1), i.e., polynomial in x.

| Name | Complexity class | Running time (T(n)) | Examples of running times | Example algorithms |

|---|---|---|---|---|

| constant time | 10 | Finding the median value in a sorted array of numbers

Calculating (−1)n | ||

| inverse Ackermann time | Amortized time per operation using a disjoint set | |||

| iterated logarithmic time | Distributed coloring of cycles | |||

| log-logarithmic | Amortized time per operation using a bounded priority queue[2] | |||

| logarithmic time | DLOGTIME | , | Binary search | |

| polylogarithmic time | ||||

| fractional power | where | , | Searching in a kd-tree | |

| linear time | n, | Finding the smallest or largest item in an unsorted array, Kadane's algorithm, linear search | ||

| "n log-star n" time | Seidel's polygon triangulation algorithm. | |||

| linearithmic time | , | Fastest possible comparison sort; Fast Fourier transform. | ||

| quasilinear time | ||||

| quadratic time | Bubble sort; Insertion sort; Direct convolution | |||

| cubic time | Naive multiplication of two matrices. Calculating partial correlation. | |||

| polynomial time | P | , | Karmarkar's algorithm for linear programming; AKS primality test[3][4] | |

| quasi-polynomial time | QP | , | Best-known O(log2n)-approximation algorithm for the directed Steiner tree problem. | |

| sub-exponential time (first definition) |

SUBEXP | for all | Contains BPP unless EXPTIME (see below) equals MA.[5] | |

| sub-exponential time (second definition) |

Best-known algorithm for integer factorization; formerly-best algorithm for graph isomorphism | |||

| exponential time (with linear exponent) |

E | , | Solving the traveling salesman problem using dynamic programming | |

| exponential time | EXPTIME | , | Solving matrix chain multiplication via brute-force search | |

| factorial time | Solving the traveling salesman problem via brute-force search | |||

| double exponential time | 2-EXPTIME | Deciding the truth of a given statement in Presburger arithmetic |

Constant time

An algorithm is said to be constant time (also written as time) if the value of [further explanation needed] is bounded by a value that does not depend on the size of the input. For example, accessing any single element in an array takes constant time as only one operation has to be performed to locate it. In a similar manner, finding the minimal value in an array sorted in ascending order; it is the first element. However, finding the minimal value in an unordered array is not a constant time operation as scanning over each element in the array is needed in order to determine the minimal value. Hence it is a linear time operation, taking time. If the number of elements is known in advance and does not change, however, such an algorithm can still be said to run in constant time.

Despite the name "constant time", the running time does not have to be independent of the problem size, but an upper bound for the running time has to be independent of the problem size. For example, the task "exchange the values of a and b if necessary so that " is called constant time even though the time may depend on whether or not it is already true that . However, there is some constant t such that the time required is always at most t.

Here are some examples of code fragments that run in constant time:

int index = 5;

int item = list;

if (condition true) then

perform some operation that runs in constant time

else

perform some other operation that runs in constant time

for i = 1 to 100

for j = 1 to 200

perform some operation that runs in constant time

If is , where a is any constant value, this is equivalent to and stated in standard notation as being .

Logarithmic time

An algorithm is said to take logarithmic time when . Since and are related by a constant multiplier, and such a multiplier is irrelevant to big O classification, the standard usage for logarithmic-time algorithms is regardless of the base of the logarithm appearing in the expression of T.

Algorithms taking logarithmic time are commonly found in operations on binary trees or when using binary search.

An algorithm is considered highly efficient, as the ratio of the number of operations to the size of the input decreases and tends to zero when n increases. An algorithm that must access all elements of its input cannot take logarithmic time, as the time taken for reading an input of size n is of the order of n.

An example of logarithmic time is given by dictionary search. Consider a dictionary D which contains n entries, sorted by alphabetical order. We suppose that, for , one may access the kth entry of the dictionary in a constant time. Let denote this kth entry. Under these hypotheses, the test to see if a word w is in the dictionary may be done in logarithmic time: consider , where denotes the floor function. If , then we are done. Else, if

Text je dostupný za podmienok Creative

Commons Attribution/Share-Alike License 3.0 Unported; prípadne za ďalších

podmienok.

Podrobnejšie informácie nájdete na stránke Podmienky

použitia.